Key GPT-4o Facts You Need to Know: Editor’s Choice

1. GPT-4o accepts text, audio, image, and video inputs and generates text, audio, and image outputs, with all modalities handled by a single network. (OpenAI, 2024)

2. GPT-4o is 50% cheaper and much faster in the API than the previous top model (GPT-4 Turbo). Yet it is still one of the most expensive models in the market right now. (OpenAI, 2024)

3. It can respond to audio inputs with a latency similar to human response time in a conversation. (OpenAI, 2024)

4. When using GPT-4o, ChatGPT Free users will now have access to features such as getting responses from both the model and the web. (OpenAI, 2024)

Real-time internet access in ChatGPT has been a long-awaited update!

Another awaited update was memory, the ability to remember previous conversations that you had with ChatGPT.

5. Memory: Memory lets ChatGPT remember things across all chats. It saves you from having to repeat information and makes future conversations more helpful. (OpenAI, 2024)

Memory is now available to ChatGPT Free, Plus, Team (except those in Europe or Korea), and Enterprise users.

6. It matches GPT-4 Turbo performance on text in English and code. This enhanced version also parses text in non-English languages. (OpenAI demo, 2024)

7. GPT-4o can capture salient features like tone, multiple speakers, and background noises, and it can even output the same. (OpenAI demo, 2024)

Voice mode that sounds like a human interlocutor was shown in the demo as well!

8. Voice model: Sam Altman made a statement on X saying that the first users will start to get access to GPT-4o Advanced Voice by the end of July, but this will be a limited “alpha” rollout. (Sam Altman on X, 2024)

Free users still have to wait patiently for the next announcement.

9. Here is a quick and creative exposition of its capabilities on the OpenAI Blog:

You can see that it not only has tasks that automate routine work like lecture summarization, but it also has inventive emerging capabilities like Poetic typography and Visual Narratives.

10. Evaluation Scores: This model sets new high scores on previous LLM benchmarks, which you can see further down the article. (OpenAI, 2024)

11. Language Tokenization: Improved token compression across 20 languages, reducing the number of tokens needed. (OpenAI, 2024)

What is GPT-4o?

GPT-4o is the newest flagship model released by OpenAI in May 2024.

GPT-4o (“o” for “omni”) is a step towards much more natural human-computer interaction: it accepts as input any combination of text, audio, image, and video.

It generates as output any combination of text, audio, and image.

The main feature of this model is that instead of relying on separate networks for various data types like text and audio, GPT-4o utilizes a unified neural network.

This allows it to analyze audio nuances like background noise and multiple speakers, and even to infer emotional tone or feelings from voice data.

Watch the introductory video by OpenAI, showcasing model capabilities here: Introduction to GPT-4o.

How Accurate is GPT-4o?

So, how accurate is this new model?

The accuracy of the new GPT-4o varies with different tests. Let us look at familiar scholastic exam results to see whether the model outputs accurate responses.

In the evals conducted, GPT-4o demonstrates improved understanding and generation of complex multimodal data, outperforming its predecessors on various benchmarks.

On the USMLE, GPT-4 leads with an accuracy of 90.00%, followed by GPT-4o at 83.05%.

The USMLE is a three-step test series that medical graduates must pass to practice medicine in the U.S. It evaluates medical knowledge, principles, and patient-centered skills.

GPT-4o has the highest accuracy, 85.39%, on the CFA Level 1 exam.

The CFA Program is a three-part exam that tests the fundamentals of investment tools, valuing assets, portfolio management, and wealth planning.

The CFA Program is typically completed by those with backgrounds in finance, accounting, economics, or business.

The SAT is a right-of-passage entrance exam used by most colleges and universities to make admissions decisions in the US.

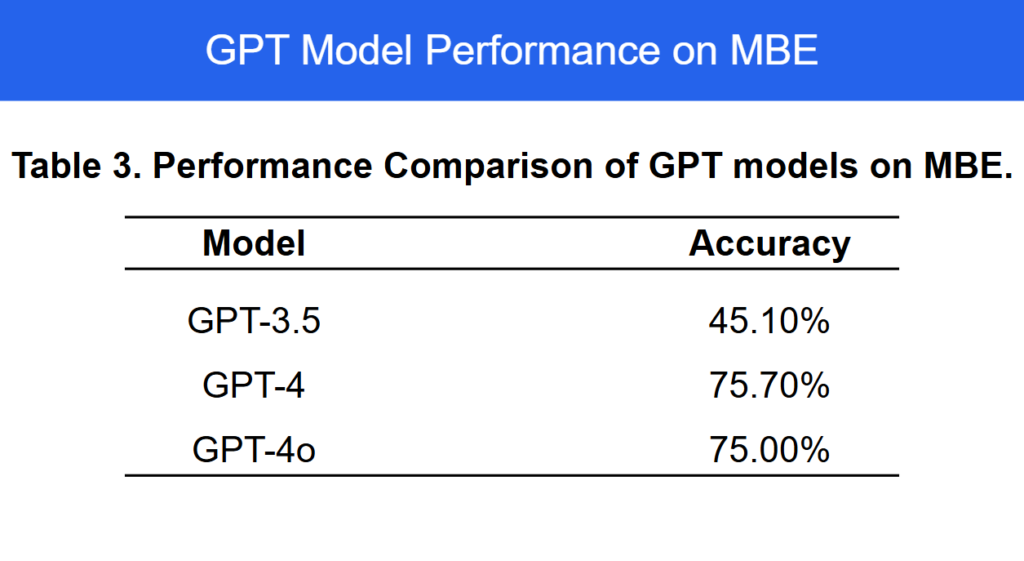

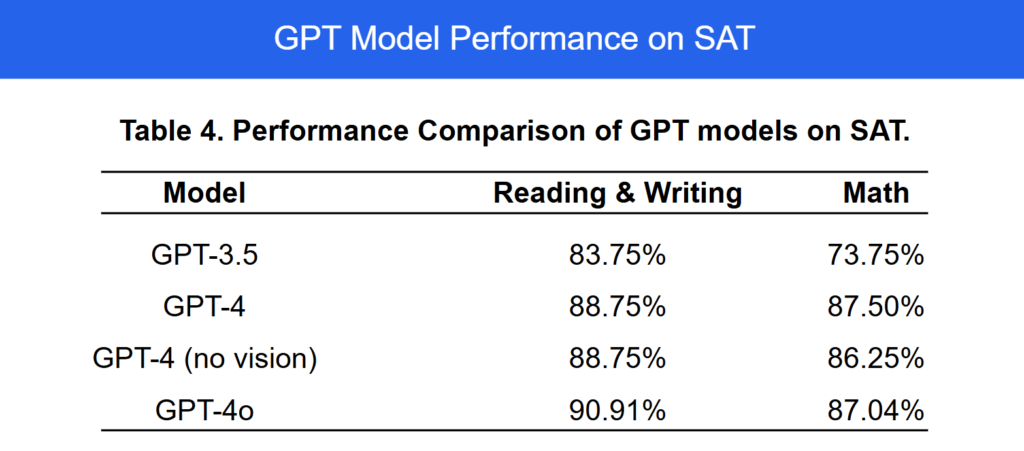

GPT-4 and GPT-4o tie at accuracies of around 75% for the Multistate Bar Exam.

The Multistate Bar Exam, or “MBE,” is a multiple-choice test required for becoming an attorney, which is composed of 200 multiple-choice questions.

Can ChatGPT 4o Analyze Data?

GPT-4o is the best performing model on the SAT with an accuracy of 90.1% in Reading and Writing, while GPT-4 is the best in the Math Section with 87.50%.

GPT-4o has advanced data analysis capabilities that provide insights and generate detailed reports.

It accepts any combination of text, audio, image, and video as input and generates any combination of text, audio, and image outputs. It can also accept charts and tables, analyze them, and produce its own charts. (OpenAI, 2024)

It supports 50 languages across sign-up and login, user settings, and more. (OpenAI, 2024)

Is GPT-4o Smarter Than GPT-4?

It is difficult to call one model ‘smarter’ than another, as some models are specialized for particular kinds of data. Still, the new model appears broad in scope and comes with versatile tools:

GPT-4o scores highest on general reasoning, which is also used as the ‘quality index’ of a model. (OpenAI, 2024)

With faster speeds and vast capabilities, GPT-4o seems to be in a league of its own in terms of intelligence. As we proceed further, we shall see that it comes out on top in most of the LLM evals. (OpenAI, 2024)

Based on the available data, GPT-4o seems to be smarter.

What Was The Process of Training GPT-4o?

Unlike earlier models that focused mainly on text, GPT-4o was trained with multiple types of data, including written text, spoken language, and visuals.

The language model’s neural network processes all inputs and outputs, allowing it to handle text, images, and audio smoothly. (OpenAI, 2024)

Is GPT-4o Free?

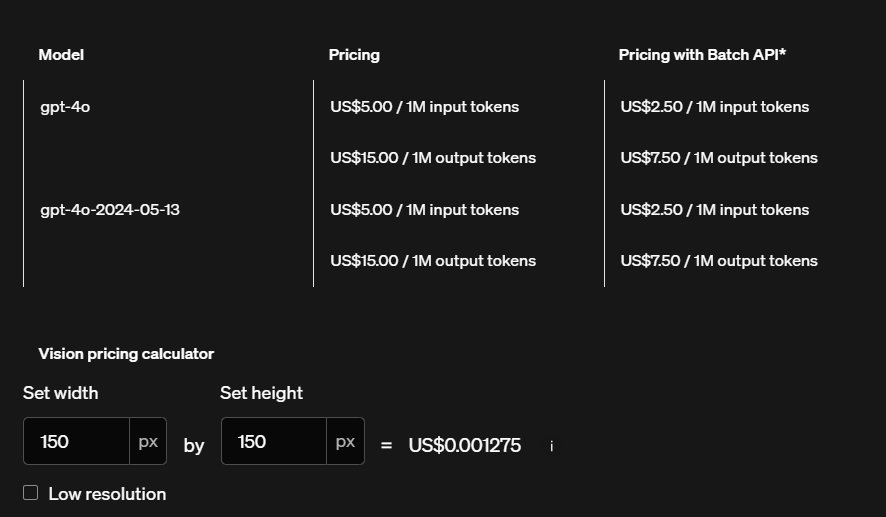

GPT-4o is not free in the API, but it is 50% cheaper than the previous GPT-4 Turbo. (OpenAI, 2024)

GPT-4o is much more expensive than the average model on the market, with a price of $7.50 per 1M Tokens (blended 3:1, input to output), where:

- Input token price: $5.00 per 1M Tokens. (OpenAI, 2024)

- Output token price: $15.00 per 1M Tokens. (OpenAI, 2024)
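To see where the blended figure comes from, here is a minimal sketch of the arithmetic. It assumes that "blended 3:1" means three parts input tokens to one part output tokens; the function name `blended_price` is ours, purely for illustration.

```python
def blended_price(input_price: float, output_price: float,
                  input_parts: int = 3, output_parts: int = 1) -> float:
    """Weighted average price per 1M tokens for a given input:output mix."""
    total_parts = input_parts + output_parts
    return (input_price * input_parts + output_price * output_parts) / total_parts


# GPT-4o list prices quoted above, in USD per 1M tokens.
GPT_4O_INPUT = 5.00
GPT_4O_OUTPUT = 15.00

price = blended_price(GPT_4O_INPUT, GPT_4O_OUTPUT)
print(f"Blended 3:1 price: ${price:.2f} per 1M tokens")  # $7.50
```

A workload with a different input-to-output mix would land on a different blended figure, so the ratio is worth adjusting to match your own usage.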

As we can see, quality has quite a price here!

Image inputs are also charged according to their resolution, with low-resolution images being cheaper.

Performance of GPT Models: A Quick Comparison

GPT models have shown improved performance over time, especially with the latest GPT-4o:

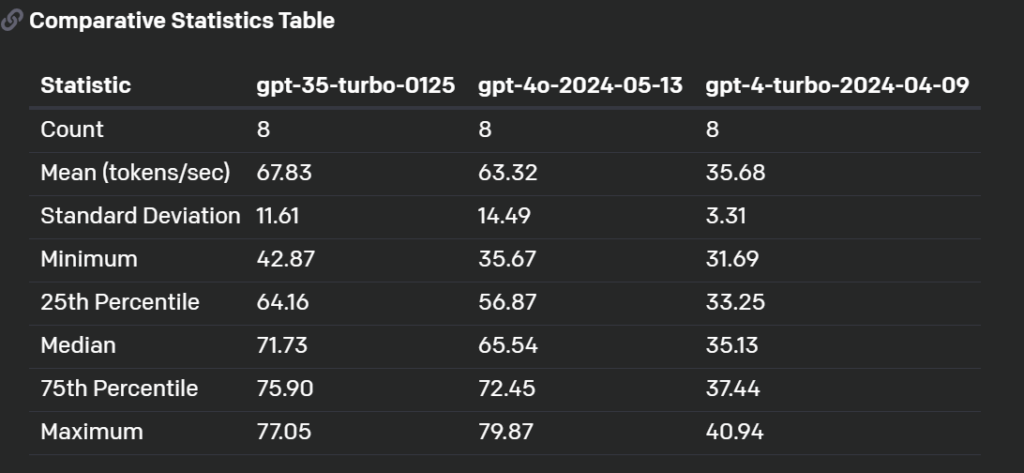

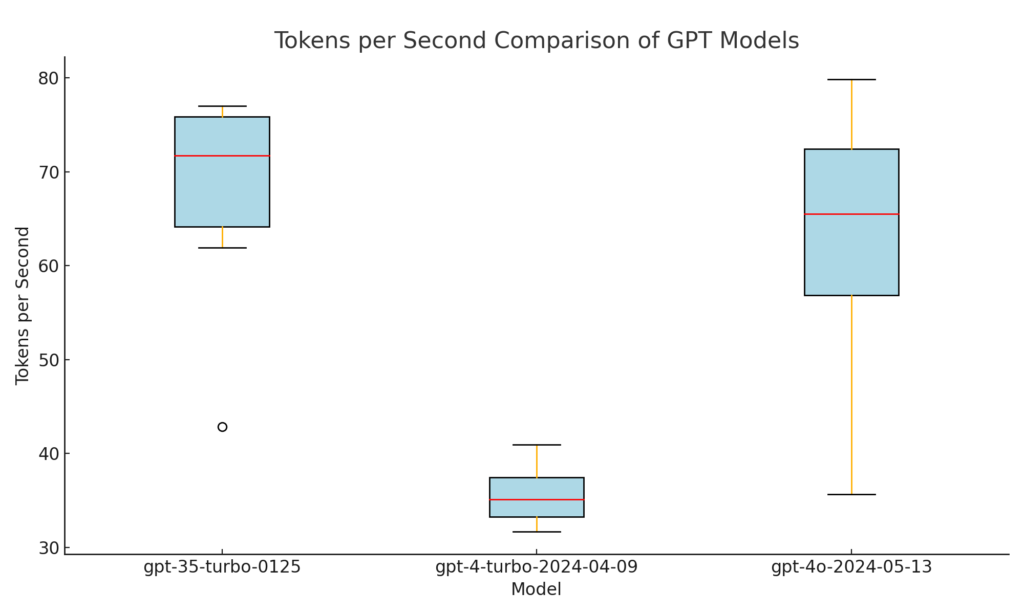

The tokens per second each model can produce serve as a metric for a model’s efficiency and speed. This can be used to compare newer models like GPT-4o with previous iterations.

So, let us dive deep and find out which model is the fastest.

1. Performance:

The GPT-3.5T (Turbo) model has the highest mean tokens per second (67.83), making it the fastest of the three models tested. GPT-4o follows with a mean of 63.32 tokens per second. (Community Open AI, 2024)

2. Consistency:

The standard deviation of tokens per second helps understand the variability in performance:

- The GPT-4o model has the lowest standard deviation (3.31), suggesting consistent performance but at a slower rate. (Community Open AI, 2024)

- The GPT-3.5T model has a moderate standard deviation (11.61), indicating relatively consistent performance with high speed. (Community Open AI, 2024)
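For readers who want to run a similar comparison, the sketch below shows how the mean and standard deviation of tokens per second could be computed from repeated runs. The readings are hypothetical placeholders, not the measurements behind the figures quoted above, and `summarize_throughput` is an illustrative helper name.

```python
from statistics import mean, stdev


def summarize_throughput(samples: list[float]) -> tuple[float, float]:
    """Return (mean, sample standard deviation) of tokens-per-second readings."""
    return mean(samples), stdev(samples)


# Hypothetical readings from repeated runs of the same prompt.
hypothetical_runs = {
    "model_a": [60.0, 64.0, 62.0, 66.0, 63.0],  # tightly clustered
    "model_b": [50.0, 80.0, 70.0, 55.0, 85.0],  # faster on average, less steady
}

for name, samples in hypothetical_runs.items():
    avg, spread = summarize_throughput(samples)
    print(f"{name}: mean={avg:.2f} tok/s, std dev={spread:.2f}")
```

A low standard deviation means individual runs stay close to the mean, which is what "consistent performance" refers to here.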

3. Effective Speed Comparison:

- While GPT-4o is not as fast as GPT-3.5T, it significantly improves over GPT-4T. (Community Open AI, 2024)

- The mean tokens per second of GPT-4o (63.32) is almost double that of GPT-4T, validating that GPT-4o is effectively faster and more efficient compared to its predecessor. (Community Open AI, 2024)

4. Conclusion:

This analysis shows that GPT-3.5T is the fastest for bulk text generation, although GPT-4o significantly improves on the output quality of previous models. (Community Open AI, 2024)

GPT-4o Vs. Other Language Models

When comparing GPT-4o to other language models, it’s clear that GPT-4o is a significant leap forward in natural human-computer interaction.

Still, this comes at a high cost, which might affect which model is preferred for large datasets.

Let’s explore the models on the market concerning evals, such as visual perception benchmarks, price, and performance. (Artificial AI, 2024)

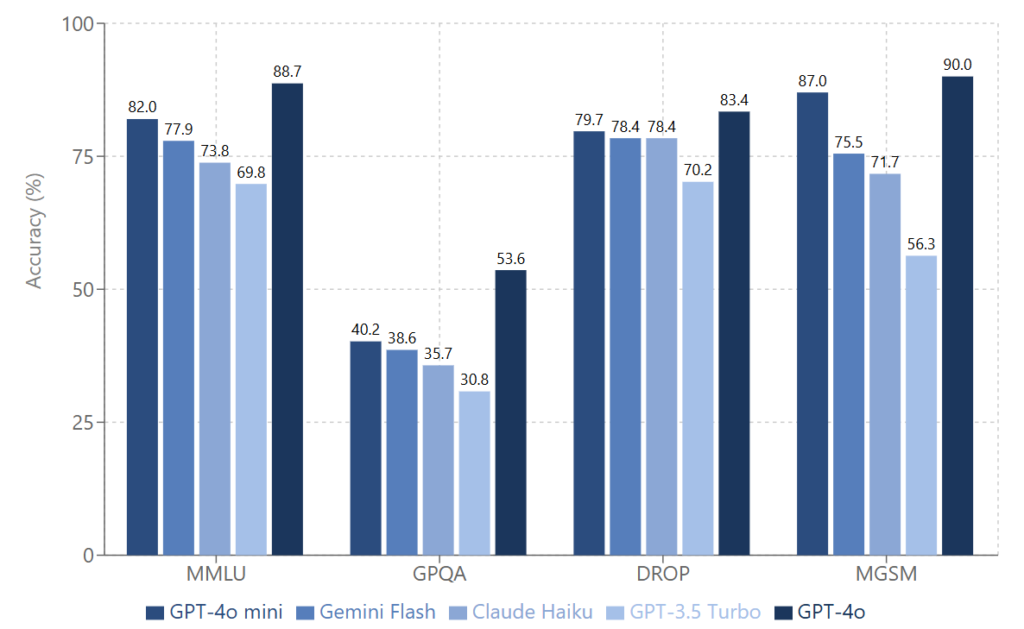

1. Large Language Benchmarks:

- MMMU (Massive Multi-discipline Multimodal Understanding):

GPT-4o performed best at 69.1%. This benchmark tests multimodal understanding and reasoning across various academic and professional domains. (Artificial AI, 2024)

- MathVista:

GPT-4o led again with 63.8%. MathVista evaluates mathematical reasoning and problem-solving within a visual context, such as interpreting graphs. (Artificial AI, 2024)

- AI2D (AI2 Diagrams):

AI2 Diagrams assesses understanding of scientific diagrams, where GPT-4o excelled with 94.2%, and GPT-4T came second with 89.4%. (Artificial AI, 2024)

- ChartQA:

This benchmark tests the ability to answer questions about charts and graphs.

While GPT-4o topped at 85.7%, Gemini 1.5 Pro (81.3%) outperformed GPT-4T (78.1%). (Artificial AI, 2024)

- DocVQA (Document Visual Question Answering):

GPT-4o led with 92.8%. This evaluates answering questions about document images. Notably, Gemini 1.0 Ultra (90.9%) outperformed GPT-4T (87.2%). (Artificial AI, 2024)

- ActivityNet:

GPT-4o performed best at 61.9%. ActivityNet is a benchmark that measures how well a model understands human activities in videos. (Artificial AI, 2024)

2. Price:

GPT-4o is one of the costliest models out there, second only to Claude Opus, at an average of $7.50 per million tokens. Llama 3 is the cheapest at $0.20 per million tokens. (Artificial AI, 2024)

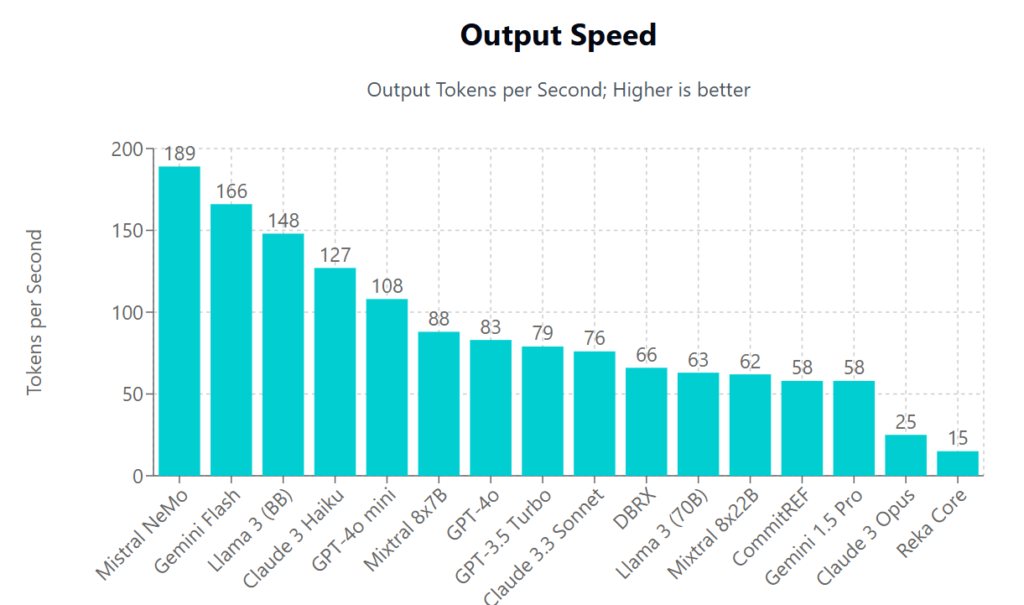

3. Performance:

While GPT-4o surpasses GPT-4 Turbo in terms of speed, models like Gemini 1.5 (166 output tokens per second) and Llama 3 (148 output tokens per second) still outpace its 83 output tokens per second. (Artificial AI, 2024)

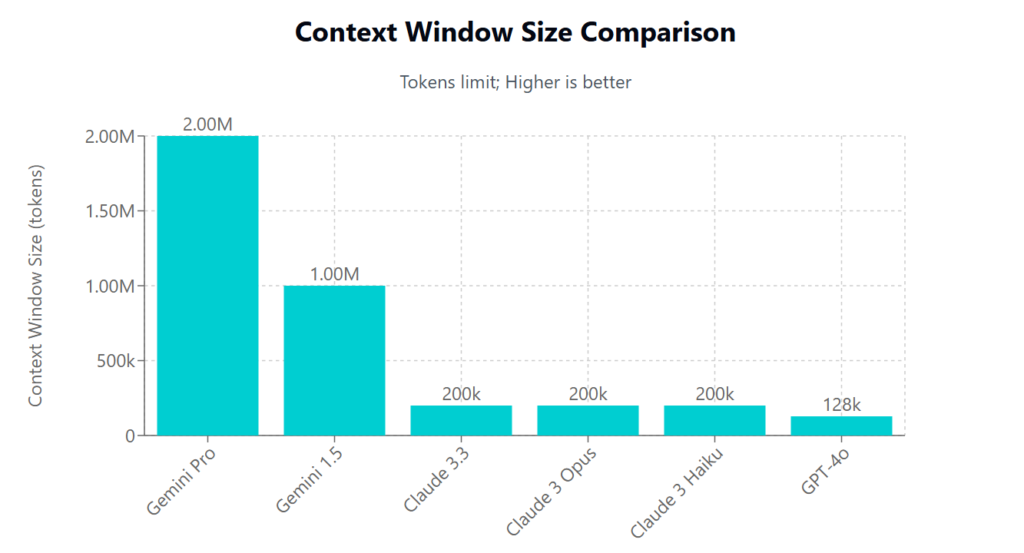
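To put these output speeds in practical terms, the sketch below estimates how long each model would take to stream a response of a given length. The 1,000-token response length is a hypothetical example, and the estimate ignores time to first token and network latency.

```python
def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Approximate streaming time, ignoring time to first token."""
    return output_tokens / tokens_per_second


# Output speeds quoted above, in tokens per second.
speeds = {"GPT-4o": 83, "Gemini 1.5": 166, "Llama 3": 148}

RESPONSE_TOKENS = 1_000  # hypothetical response length

for model, tps in speeds.items():
    seconds = generation_seconds(RESPONSE_TOKENS, tps)
    print(f"{model}: ~{seconds:.1f} s for {RESPONSE_TOKENS} tokens")
```

At these rates, a 1,000-token answer would take roughly 12 seconds on GPT-4o and about half that on Gemini 1.5.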

4. Context window:

At 128k tokens, GPT-4o’s context window is not especially large compared with other models on the market. Gemini 1.5 Pro far surpasses it with a context window of 2 million tokens. (Artificial AI, 2024)

6 Quick Facts About GPT-4o Mini

In a bid described by OpenAI’s CEO Sam Altman as “towards intelligence too cheap to meter”, the company released GPT-4o Mini in July.

And indeed as we shall see, the new model is very pocket-friendly:

1. It is priced at 15 cents per million input tokens and 60 cents per million output tokens, an order of magnitude more affordable than previous frontier models and more than 60% cheaper than GPT-3.5 Turbo. (OpenAI, 2024)

GPT-4o is priced at $5 per million input tokens and $15 per million output tokens, which makes the new model about 33 times cheaper on input and 25 times cheaper on output, or roughly 30 times cheaper overall.
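The price gap is easy to check with a quick calculation. The sketch below compares the cost of the same workload on both models using the prices quoted above; the workload size (3M input tokens, 1M output tokens) is a hypothetical example, and `workload_cost` is an illustrative helper name.

```python
# USD per 1M tokens, as quoted above.
PRICES_PER_MILLION = {
    "gpt-4o": {"input": 5.00, "output": 15.00},
    "gpt-4o-mini": {"input": 0.15, "output": 0.60},
}


def workload_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD for a given number of input and output tokens."""
    p = PRICES_PER_MILLION[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


# Hypothetical workload with a 3:1 input-to-output token mix.
INPUT_TOKENS, OUTPUT_TOKENS = 3_000_000, 1_000_000

full = workload_cost("gpt-4o", INPUT_TOKENS, OUTPUT_TOKENS)
mini = workload_cost("gpt-4o-mini", INPUT_TOKENS, OUTPUT_TOKENS)
print(f"GPT-4o: ${full:.2f}, GPT-4o mini: ${mini:.2f}, ratio: {full / mini:.1f}x")
```

For this mix, the workload costs about $30 on GPT-4o and about $1.05 on GPT-4o mini, a ratio of roughly 28.6 to 1.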

Let’s go through its performance benchmarks:

2. GPT-4o mini scores 82% on MMLU (Massive Multitask Language Understanding) and currently outperforms GPT-4 on chat preferences in the LMSYS leaderboard. (OpenAI, 2024)

3. The model has a context window of 128K tokens, supports up to 16K output tokens per request, and has knowledge up to October 2023. (OpenAI, 2024)


4. On MGSM, measuring math reasoning, GPT-4o mini scored 87.0%, while GPT-4 scored 90.2%. (OpenAI, 2024)

5. GPT-4o mini also shows strong performance on MMMU, a multimodal reasoning eval, scoring 59.4%. GPT-4o scored 69.1% on the same. (OpenAI, 2024)

So the performance of the smaller model doesn’t lag by much.

6. GPT-4o mini has the same safety mitigations built-in as GPT-4o. (OpenAI, 2024)

Final Thoughts

As per OpenAI’s mission statement, the company believes this research will eventually lead to artificial general intelligence, a system that can solve human-level problems.

The recent audio understanding capabilities of the new model, along with the other GPT-4o statistics you have seen, show how immersive it can be.

Voice mode, memory, and real-time web browsing have finally been announced and will be rolled out soon.

GPT-4o is still a tad on the pricier side, so time will tell if future models can mitigate this.

You can read our other reports on the impact of AI here: